MAGF: Multi-scale Attention and Gated Fusion for Multi-modal Glaucoma Grading

Overview

Glaucoma is one of the leading causes of irreversible blindness worldwide. Clinical diagnosis typically relies on multiple imaging modalities, such as color fundus photography (CFP) and optical coherence tomography (OCT), which provide complementary structural information about the optic nerve head and retinal nerve fiber layer.

However, effectively integrating heterogeneous information from multiple modalities remains a challenging problem for automated glaucoma grading systems.

We propose MAGF, a multi-modal deep learning framework that integrates CFP and OCT volumetric scans through multi-scale attention and gated fusion mechanisms. By enhancing modality-specific representations and dynamically balancing cross-modal contributions, MAGF provides a robust solution for multi-modal glaucoma severity grading.

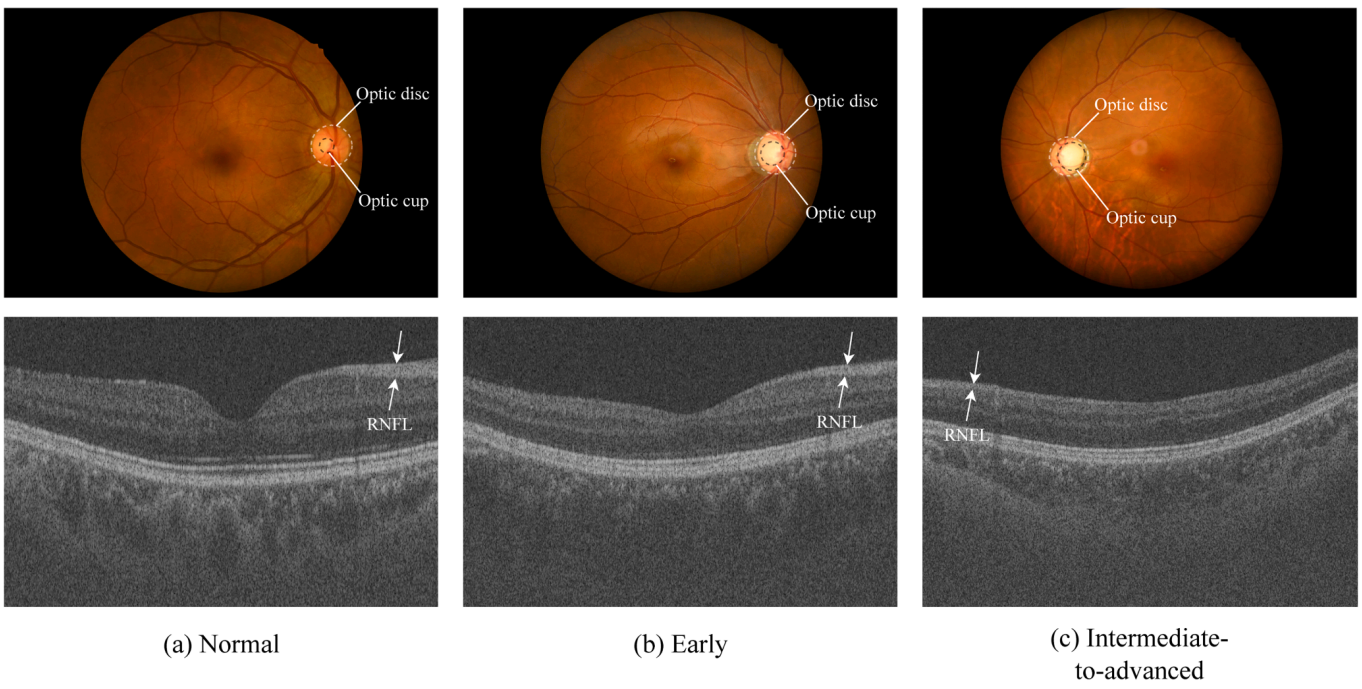

Dataset & Glaucoma Stages

The GAMMA dataset contains paired CFP and OCT images across three glaucoma grades: non-glaucoma, early-stage, and intermediate-to-advanced. The following figure illustrates examples from each stage, highlighting key clinical indicators including the optic cup-to-disc ratio and retinal nerve fiber layer (RNFL) thickness.

Highlights

- A multi-modal glaucoma grading framework integrating CFP and OCT volumetric scans.

- A Multi-scale Attention Fusion Module (MAFM) that enhances OCT structural feature representation.

- A Gated Fusion Module (GFM) that adaptively balances contributions from different modalities.

- Evaluation on the GAMMA benchmark dataset, where MAGF reaches a kappa of 0.9183.

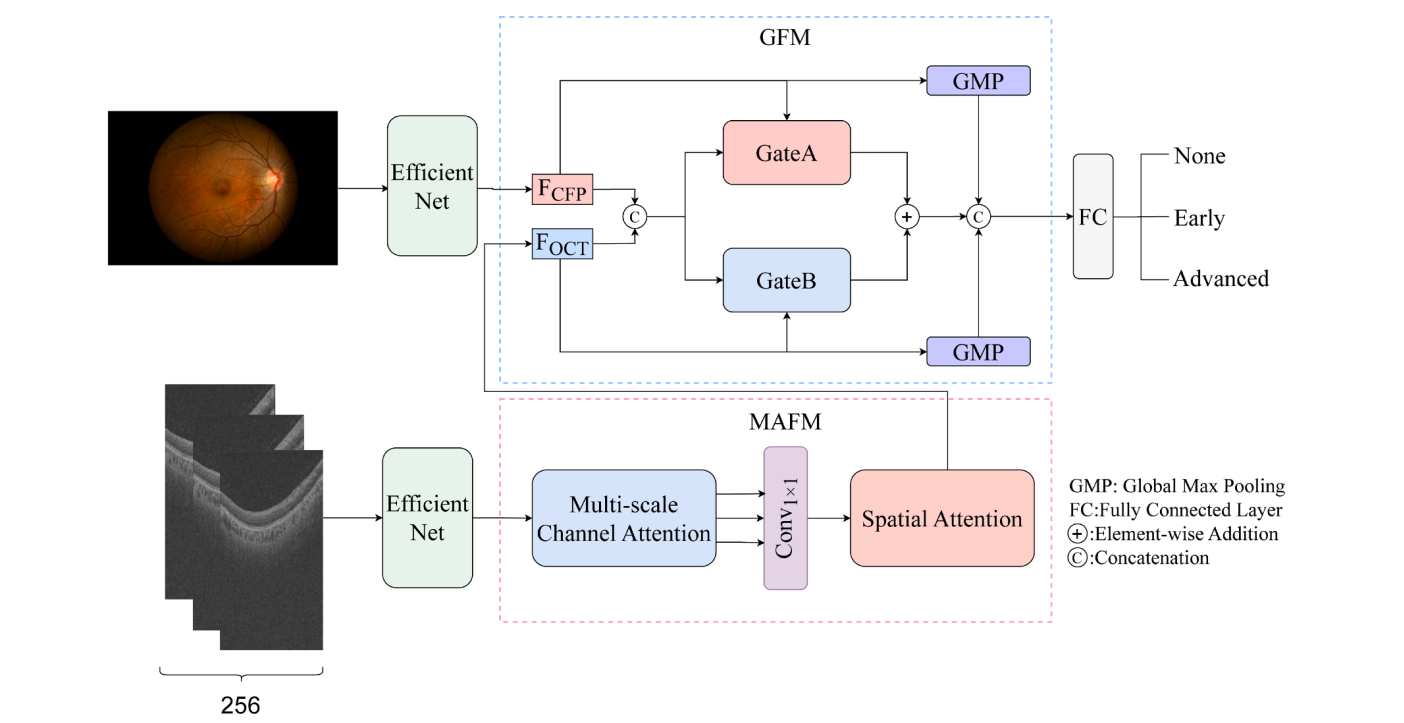

Method Overview

MAGF adopts a dual-branch architecture to extract features from color fundus photographs and OCT volumetric scans. The framework consists of three main components:

- Dual-branch Feature Extraction: Separate feature encoders are used to extract modality-specific representations from CFP and OCT images.

- Multi-scale Attention Fusion Module (MAFM): Applied to the OCT branch, MAFM captures structural patterns using multi-scale channel attention and spatial attention mechanisms.

- Gated Fusion Module (GFM): The GFM dynamically fuses features from CFP and OCT by learning adaptive gating weights that regulate the contribution of each modality.

Multi-scale Attention Fusion Module (MAFM)

OCT images contain rich structural information about the retinal layers, but their representations may vary across scales. The MAFM enhances OCT features through a combination of:

- Multi-scale channel attention, capturing patterns across different receptive fields (kernel sizes 3, 7, and 13)

- Spatial attention, emphasizing informative anatomical regions

This design allows the network to better capture structural variations relevant to glaucoma progression.
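
The two attention steps described above can be sketched in NumPy. This is only an illustration under stated assumptions: the channel attention is written in an ECA-like style (one 1-D filter per kernel size applied to the pooled channel descriptor), the spatial attention is a simplified CBAM-like mask with two scalar weights, and all function names and random weights are hypothetical. Only the kernel sizes 3, 7, and 13 come from the description above.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def conv1d_same(v, kernel):
    """1-D cross-correlation with zero padding; output length equals len(v)."""
    pad = len(kernel) // 2
    padded = np.pad(v, pad)
    return np.array([padded[i:i + len(kernel)] @ kernel for i in range(len(v))])

def multi_scale_channel_attention(feat, kernels):
    """feat: (C, H, W). Each kernel filters the pooled channel descriptor (assumed design)."""
    desc = feat.mean(axis=(1, 2))                      # global average pooling -> (C,)
    logits = np.mean([conv1d_same(desc, k) for k in kernels], axis=0)
    weights = sigmoid(logits)                          # one weight in (0, 1) per channel
    return feat * weights[:, None, None]

def spatial_attention(feat, w_avg, w_max):
    """Build one (H, W) mask from the per-pixel channel mean and max (simplified)."""
    mask = sigmoid(w_avg * feat.mean(axis=0) + w_max * feat.max(axis=0))
    return feat * mask[None, :, :]

rng = np.random.default_rng(0)
oct_feat = rng.standard_normal((16, 8, 8))             # toy OCT feature map
kernels = [rng.standard_normal(k) * 0.1 for k in (3, 7, 13)]
out = spatial_attention(multi_scale_channel_attention(oct_feat, kernels), 0.5, 0.5)
print(out.shape)  # (16, 8, 8)
```

Both steps only rescale the input, so the output keeps the shape of the OCT feature map and can be passed on unchanged to a fusion stage.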

Gated Fusion Module (GFM)

Simply concatenating features from multiple modalities may lead to suboptimal performance because each modality contributes differently across cases. The GFM introduces an adaptive gating mechanism that dynamically balances the contributions of CFP and OCT features during feature fusion. By learning modality-specific weights, the model can:

- Emphasize the most informative modality

- Suppress redundant or noisy information

This adaptive fusion strategy improves the robustness of multi-modal glaucoma grading.
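
A minimal sketch of gated fusion follows. It assumes a common formulation: a sigmoid gate computed from the concatenated features, followed by an element-wise convex combination. The exact gate used in MAGF is not specified here, and the names `gated_fusion`, `W`, and `b` are hypothetical.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gated_fusion(f_cfp, f_oct, W, b):
    """Fuse two (D,) feature vectors with an element-wise gate g in (0, 1).

    g = sigmoid(W @ [f_cfp; f_oct] + b)
    fused = g * f_cfp + (1 - g) * f_oct
    """
    g = sigmoid(W @ np.concatenate([f_cfp, f_oct]) + b)
    return g * f_cfp + (1.0 - g) * f_oct, g

rng = np.random.default_rng(0)
D = 4
f_cfp = rng.standard_normal(D)      # toy CFP feature vector
f_oct = rng.standard_normal(D)      # toy OCT feature vector

# With zero weights and zero bias the gate is 0.5 everywhere: a plain average.
fused_avg, g_avg = gated_fusion(f_cfp, f_oct, np.zeros((D, 2 * D)), np.zeros(D))

# A large positive bias pushes the gate towards 1, so the CFP features dominate.
fused_cfp, g_cfp = gated_fusion(f_cfp, f_oct, np.zeros((D, 2 * D)), np.full(D, 50.0))
```

Because the gate is computed from the features themselves, a trained `W` lets the weighting change from sample to sample, which plain concatenation cannot do.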

Results & Visualization

MAGF is evaluated on the GAMMA dataset, a public benchmark for multi-modal glaucoma grading that contains paired CFP and OCT images. Experimental results show that MAGF achieves a kappa of 0.9183, the highest among the compared multi-modal methods, which supports the effectiveness of multi-scale attention and gated feature fusion.

Performance Comparison

| Method | Kappa |
|---|---|
| MAGF (Ours) | 0.9183 |
| ELF | 0.8960 |
| Mstnet | 0.8920 |
| GGUM | 0.8860 |
| ETSCL | 0.8844 |
| GAMMA Challenge Winner | 0.8649 |
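
For readers unfamiliar with the metric, Cohen's kappa can be computed as in the sketch below. The table above reports only "Kappa", so whether a weighting scheme is applied is not stated here. The optional quadratic weighting is included as an assumption because it is often used for ordinal grades. The function name and the toy labels are hypothetical.

```python
def cohen_kappa(y_true, y_pred, num_classes, weights=None):
    """Cohen's kappa; weights=None (unweighted) or "quadratic".

    Undefined (division by zero) if the expected disagreement is 0,
    e.g. when every label and prediction falls in a single class.
    """
    n = len(y_true)
    conf = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        conf[t][p] += 1
    row = [sum(conf[i]) for i in range(num_classes)]
    col = [sum(conf[i][j] for i in range(num_classes)) for j in range(num_classes)]

    def w(i, j):
        # Disagreement penalty between true class i and predicted class j.
        if weights == "quadratic":
            return (i - j) ** 2 / (num_classes - 1) ** 2
        return 0.0 if i == j else 1.0

    idx = range(num_classes)
    observed = sum(w(i, j) * conf[i][j] for i in idx for j in idx) / n
    expected = sum(w(i, j) * row[i] * col[j] for i in idx for j in idx) / n ** 2
    return 1.0 - observed / expected

# Toy labels: 0 = non-glaucoma, 1 = early-stage, 2 = intermediate-to-advanced.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 0, 1, 2, 2, 2]
print(round(cohen_kappa(y_true, y_pred, 3), 4))               # 0.75
print(round(cohen_kappa(y_true, y_pred, 3, "quadratic"), 4))  # 0.8889
```

The quadratic variant penalizes a confusion between adjacent grades less than one between distant grades, which is why the same single error yields a higher score.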

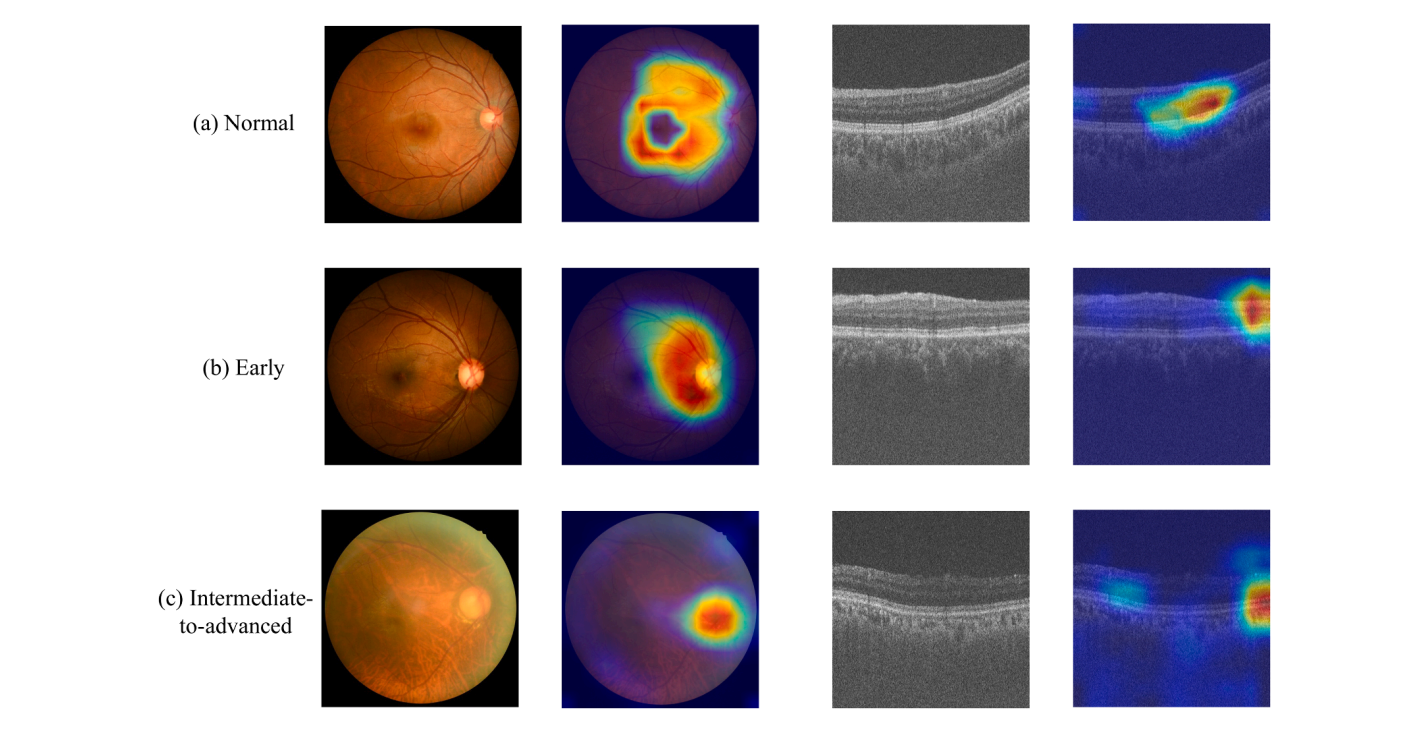

To better understand the model's behavior, Grad-CAM visualization is applied to highlight the regions that contribute most to its predictions.

The visualization results show that CFP attention maps focus on the optic disc and cup regions, while OCT attention maps emphasize retinal nerve fiber layer structures. These observations are consistent with clinical knowledge of glaucoma pathology.

Technical Details

Implementation

- Backbone: EfficientNet-B7 (ImageNet pretrained)

- Optimizer: Adam with learning rate 0.0001

- Loss Function: Cross-entropy loss

- Batch Size: 3

- Training Iterations: 1000

- Hardware: NVIDIA GeForce RTX 4090 GPU
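
The settings above can be gathered into a single configuration object, as in the sketch below. The class and field names are hypothetical, and the epoch estimate assumes that one iteration is one mini-batch step over the 200 training samples, which the list does not state.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TrainConfig:
    backbone: str = "EfficientNet-B7"   # ImageNet pretrained
    optimizer: str = "Adam"
    learning_rate: float = 1e-4
    loss: str = "cross_entropy"
    batch_size: int = 3
    iterations: int = 1000

    def approx_epochs(self, num_train_samples: int) -> float:
        """Passes over the training set, assuming one iteration = one mini-batch step."""
        return self.iterations * self.batch_size / num_train_samples

cfg = TrainConfig()
print(cfg.approx_epochs(200))  # 15.0
```

Under that assumption, 1000 iterations at batch size 3 correspond to roughly 15 passes over the training set.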

Dataset

- GAMMA Dataset: 300 CFP-OCT paired samples from Zhongshan Ophthalmic Center (Dataset Link)

- Training: 200 samples

- Testing: 100 samples

- Classes: Non-glaucoma, Early-stage, Intermediate-to-advanced

Citation

@article{cheng2026magf,
  title={MAGF: Multi-scale attention and gated fusion for multi-modal glaucoma grading},
  author={Cheng, Haixi and Hong, Chaoqun and Zhang, Bo and Fang, Huihui and Xu, Yanwu and Yeo, Si Yong},
  journal={Expert Systems With Applications},
  volume={312},
  pages={131388},
  year={2026},
  publisher={Elsevier},
  doi={10.1016/j.eswa.2026.131388}
}

Published in Expert Systems With Applications 2026

Volume 312, Article 131388